Unsurprisingly, simply “using AI” in your compliance program is no longer an automatic competitive advantage – it’s the baseline. Our 2026 State of Financial Crime survey showed that the majority of firms (93%) are either using standard AI for customer screening now or evaluating it. AI is no longer the future; it’s table stakes.

However, there is a clear execution gap. While 93% are using or evaluating standard AI, only 33% are currently using the more advanced agentic or predictive capabilities that actually address the manual workload problem.

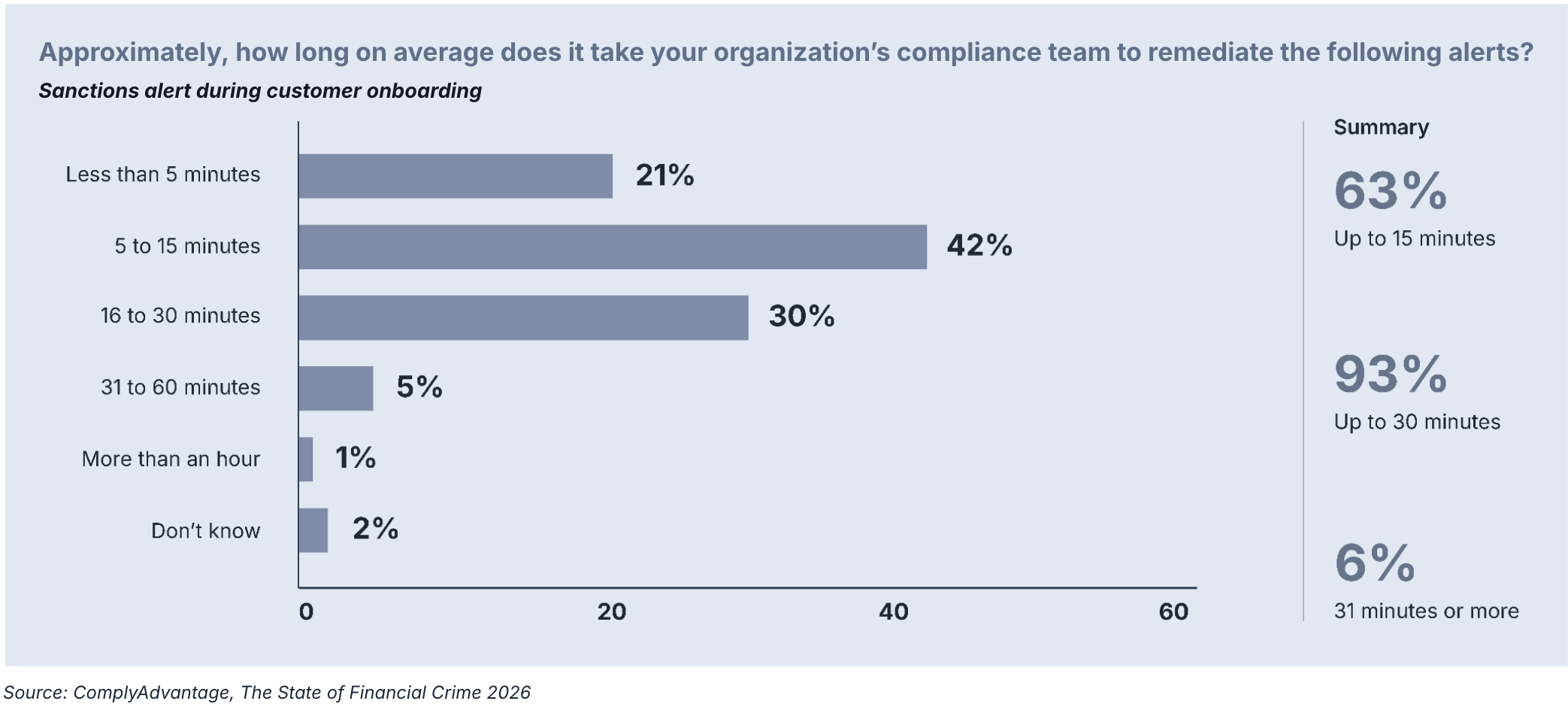

This disconnect explains why we haven’t seen a universal collapse in remediation times. Currently, 42% of firms say they typically spend five to 15 minutes remediating a single sanctions alert. For almost a third (30%), that figure rises to an inefficient 16 to 30 minutes for a single politically exposed person (PEP) or adverse media alert during onboarding.

If a financial institution is receiving only 200 screening alerts each day – significantly fewer than reported by some sources – a mere 15 minutes per alert would require 50 working hours per day to clear the list. Assuming an eight-hour working day, an effective team would need more than six full-time analysts (50 ÷ 8 = 6.25) dedicated exclusively to reviewing sanctions alerts, without accounting for breaks or other customer screening alerts.
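The staffing arithmetic above can be reproduced in a few lines. This is a minimal sketch, not a capacity-planning tool: the function name `analysts_needed` and the eight-hour analyst day are assumptions made for illustration, and the inputs are the survey-style figures quoted in the text.

```python
import math


def analysts_needed(alerts_per_day: int,
                    minutes_per_alert: float,
                    hours_per_analyst_day: float = 8.0) -> tuple[float, int]:
    """Return (review hours needed per day, whole analysts required).

    hours_per_analyst_day is an assumed value; breaks, leave, and other
    alert types are deliberately ignored, as in the text.
    """
    total_hours = alerts_per_day * minutes_per_alert / 60
    headcount = math.ceil(total_hours / hours_per_analyst_day)
    return total_hours, headcount


# 200 sanctions alerts a day at 15 minutes each
hours, analysts = analysts_needed(200, 15)
print(hours, analysts)  # 50.0 7
```

Rounding 6.25 up to whole people gives seven analysts. Raising `minutes_per_alert` to 30, the upper end reported for PEP and adverse media alerts, doubles the review hours for the same alert volume.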

Any backlog would result in ever-increasing loads on a team that would never be able to keep up. Even assuming the institutions seeing still longer times are merely in transition to something more efficient, these numbers are problematic.

Are customer screening processes scalable?

The traditional solution to the manual remediation drain was to hire proportionally more personnel as needs grew. But our survey shows that most firms are consistently looking for greater efficiency in their compliance processes. For firms looking to scale, it’s imperative that the cost of anti-money laundering (AML) compliance not multiply in lockstep with a growing customer base.

For firms struggling to move past these 15-minute resolution times, the challenge often lies in how their AI is integrated. If an AI solution is “bolted on” as a separate layer rather than part of an AI-native stack, it often lacks the deep access to the context needed to actually resolve a case. It might generate a high-quality alert, but if it can’t synthesize the reasoning or gather the supporting evidence, the analyst still has to do the investigative legwork from scratch. Unlocking true scalability requires moving beyond tools that merely highlight problems to an integrated architecture that helps solve them.

As we’ve seen among this year’s respondents, standard AI has already solved some operational inefficiencies: instead of static rules, alerts are based on more dynamically interpreted customer data. Yet these solutions are still one-dimensional: the tool’s job is to generate an alert, full stop. From there, the rest of the investigation is up to human analysts, and that multi-step process takes time. Reducing alerts alone may not be enough.

Glossary

Agentic AI describes AI models that are equipped with agency: the ability to plan, reason, and execute tasks in pursuit of objectives. Unlike ‘standard AI’ systems that are limited to a single output (e.g., a model that flags a transaction as suspicious based on a pre-set rule), agentic AI can interact with external tools, adapt behavior based on context, and operate iteratively until the desired outcome is achieved. An example of agentic AI is the auto-remediation of level 1 alerts – where the AI not only identifies a low-risk alert but also independently gathers the necessary data, drafts the closure narrative, and closes the case without human intervention.

To fully scale, firms need to look beyond merely controlling alert numbers and accuracy to the investigation process itself. Each investigation requires a number of definable steps with contingency points. Instead of leaving every step to a human, identify which elements can be automated with AI agents, freeing analysts to investigate more efficiently.

Closing the bottleneck with agentic AI

Agentic AI is like a virtual team that acts behind the scenes. It isn’t limited to deciding when and why to generate an alert. Instead, multiple specialist agents can communicate with each other and other system tools to complete entire workflows. This can dramatically reduce a customer screening team’s operational workload when implemented responsibly and based on well-researched best practices.

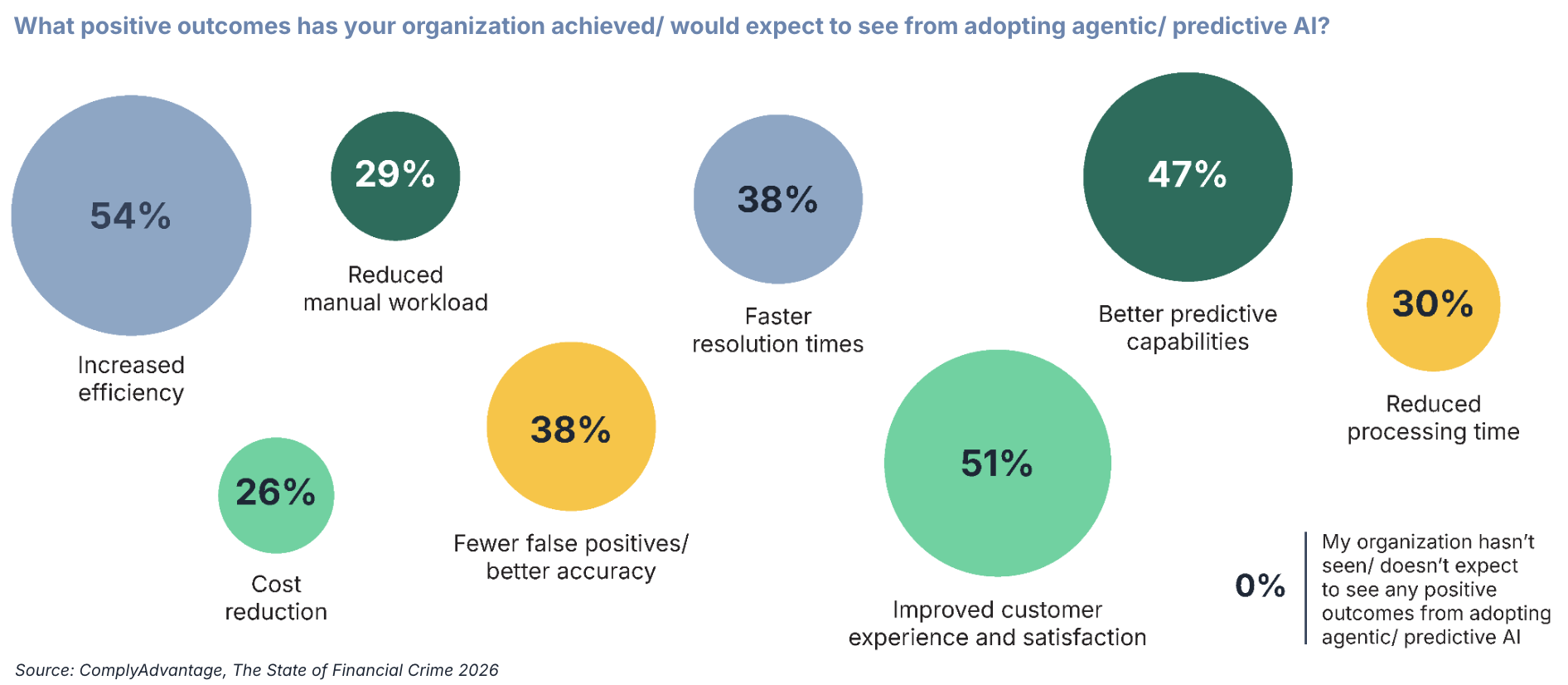

Our survey respondents were clear that agentic AI is, or is expected to be, an asset. Here are some of the advantages they reported for both agentic and predictive AI:

- Over half expect or experience improved efficiency (54%) and better customer experience (51%).

- 38% expect or experience faster resolution times combined with better accuracy.

- Over a quarter (26%) expect or experience reduced costs thanks to agentic or predictive AI.

- Just under a third expect or experience reduced processing time (30%) and manual workload (29%).

When looking at what kinds of work agentic AI can take off teams’ plates, the reasons for these responses become clear. In ComplyAdvantage’s AI-native screening workflow, the process is streamlined into a single, cohesive decision chain:

- Intelligent alert precision: The process begins with our high-performance matching engine. Rather than generating a mass of low-quality alerts for a triage team to sort through, the system uses machine learning to evaluate every match against data points like name rarity and date of birth. This automatically resolves the vast majority of obvious false positives before they ever reach a human.

- The auto-remediation agent: For the remaining matches that require closer inspection, an autonomous AI agent takes over. This isn’t black-box automation; the agent acts as a virtual member of the compliance team, operating within your existing case management workflow and following the specific risk logic and escalation thresholds your firm defines.

- Autonomous research and analysis: The agent performs the heavy lifting of a traditional Level 1 analyst. It deep-dives into the data – analyzing linked adverse media, inferred relationships, and PEP status – to determine if a match is a true risk or a false positive.

- Natural-language reasoning: Instead of passing fragmented notes between different systems, the agent synthesizes its findings into a clear, natural-language narrative. It documents its full reasoning and the underlying logic in an immutable audit log, ensuring every automated decision is as transparent and defensible to a regulator as one made by a human.

- Human-in-the-loop augmentation: If the agent encounters a complex case where its confidence falls below your pre-set threshold, it escalates the alert to your human experts. The analyst doesn’t start from scratch; they receive a structured case file with all the necessary evidence and narratives already prepared.

By handling 65-85% of false positives autonomously, the system allows teams to focus their expertise on the highest-risk entities. What used to take minutes or hours of manual research now happens in mere seconds, delivering more thorough, consistent, and audit-ready results.
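To make the decision chain described above more concrete, here is a simplified sketch of a three-way triage: obvious false positives are resolved up front, confident agent decisions are closed with a narrative, and everything else is escalated with the evidence attached. It is illustrative only and does not represent ComplyAdvantage’s implementation or API. The `Alert` and `Decision` types, the score fields, and the threshold values are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Alert:
    alert_id: str
    match_score: float        # hypothetical 0-1 score from a matching engine
    agent_confidence: float   # hypothetical 0-1 confidence that this is a false positive
    evidence: list[str] = field(default_factory=list)


@dataclass
class Decision:
    alert_id: str
    outcome: str              # "auto_resolved", "agent_closed", or "escalated"
    narrative: str            # plain-language reasoning kept for the audit trail


def triage(alert: Alert,
           match_floor: float = 0.2,
           confidence_threshold: float = 0.9) -> Decision:
    """Route one alert; both thresholds are assumed, firm-defined values."""
    # Step 1: weak matches are treated as obvious false positives.
    if alert.match_score < match_floor:
        return Decision(alert.alert_id, "auto_resolved",
                        "Match score below floor; treated as a false positive.")

    # Step 2: summarize the gathered evidence as a narrative.
    narrative = "Evidence reviewed: " + ("; ".join(alert.evidence) or "none")

    # Step 3: close only when the agent is confident, otherwise escalate
    # with the prepared narrative so the analyst does not start from scratch.
    if alert.agent_confidence >= confidence_threshold:
        return Decision(alert.alert_id, "agent_closed", narrative)
    return Decision(alert.alert_id, "escalated", narrative)


alerts = [
    Alert("A1", match_score=0.05, agent_confidence=0.0),
    Alert("A2", match_score=0.70, agent_confidence=0.95, evidence=["DOB mismatch"]),
    Alert("A3", match_score=0.80, agent_confidence=0.60, evidence=["Possible PEP link"]),
]
audit_log = [triage(a) for a in alerts]
print([d.outcome for d in audit_log])  # ['auto_resolved', 'agent_closed', 'escalated']
```

In this sketch the escalated alert carries the same narrative an auto-closed one would, which mirrors the idea that the analyst receives a prepared case file. A production system would also need a tamper-evident audit store and firm-specific risk logic, neither of which is modeled here.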

Move toward a human + agentic compliance model

ComplyAdvantage Mesh provides 24/7 automated case remediation, freeing compliance teams from low-risk tasks and escalating only ambiguous cases, ensuring human expertise is focused on issues that require intuition and moral judgment.

Originally published 26 March 2026, updated 26 March 2026

Disclaimer: This is for general information only. The information presented does not constitute legal advice. ComplyAdvantage accepts no responsibility for any information contained herein and disclaims and excludes any liability in respect of the contents or for action taken based on this information.

Copyright © 2026 IVXS UK Limited (trading as ComplyAdvantage).